
Citation:

Ye Lin, Boheng Jiang, Jiayuan Chen, Dawei Li, Yuan Du, Li Du. Challenges and trends of analog computing-in-memory (ACIM)[J]. Journal of Semiconductors, 2026, In Press. doi: 10.1088/1674-4926/26020042

Y Lin, B H Jiang, J Y Chen, D W Li, Y Du, and L Du, Challenges and trends of analog computing-in-memory (ACIM)[J]. J. Semicond., 2026, accepted. doi: 10.1088/1674-4926/26020042

Challenges and trends of analog computing-in-memory (ACIM)

DOI: 10.1088/1674-4926/26020042

CSTR: 32376.14.1674-4926.26020042


References

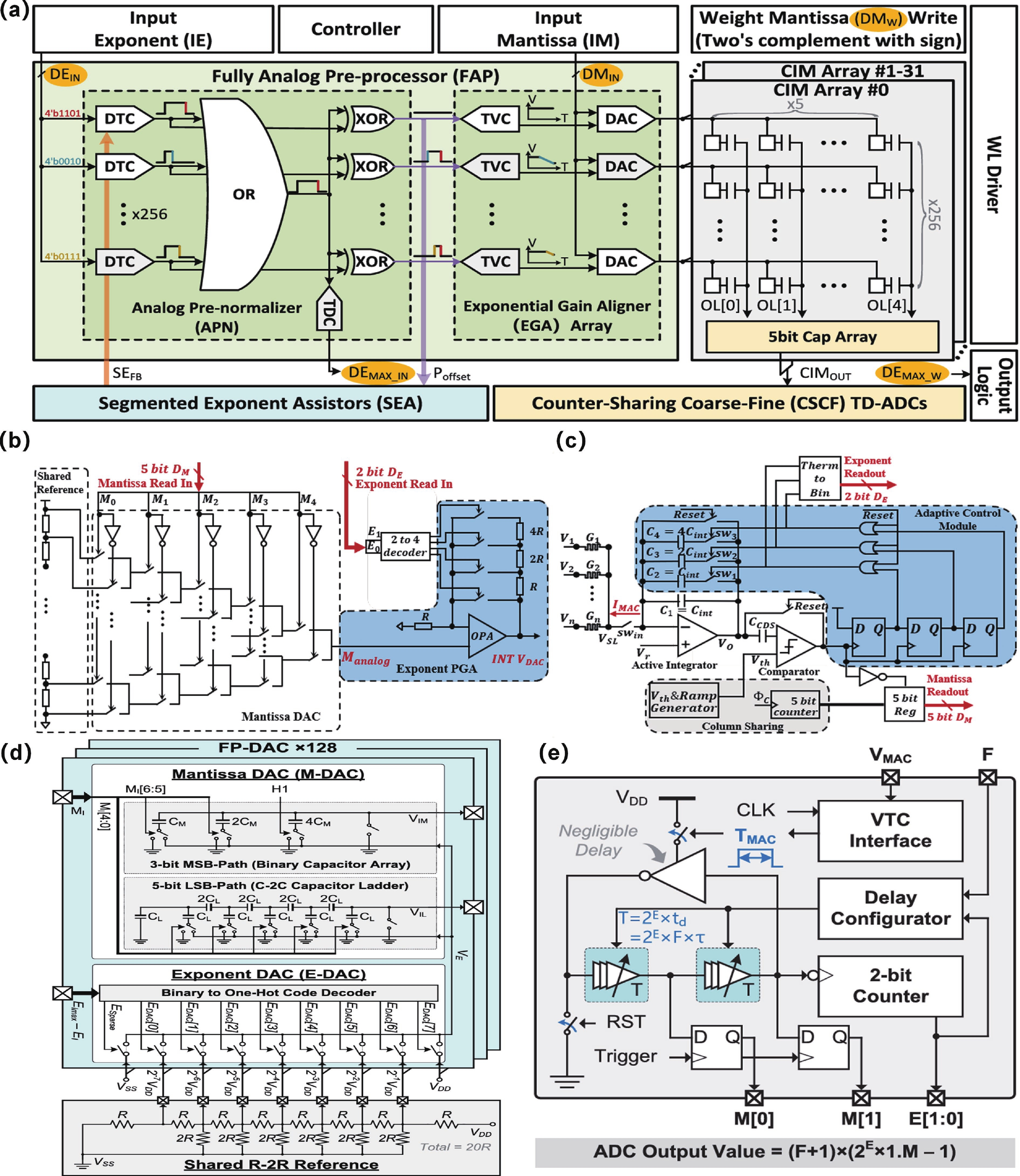

[1] Lin Y, Li Y D, Zhang H, et al. An 11T1C bit-level-sparsity-aware computing-in-memory macro with adaptive conversion time and computation voltage. IEEE Trans Circuits Syst I Regul Pap, 2024, 71(11): 4985 doi: 10.1109/TCSI.2024.3419902[2] Zhang H, Yin W H, He S N, et al. An efficient two-stage pipelined compute-in-memory macro for accelerating transformer feed-forward networks. IEEE Trans Very Large Scale Integr VLSI Syst, 2024, 32(10): 1889 doi: 10.1109/TVLSI.2024.3432403[3] Zhang H, He S N, Lu X, et al. SSM-CIM: An efficient CIM macro featuring single-step multi-bit MAC computation for CNN edge inference. IEEE Trans Circuits Syst I Regul Pap, 2023, 70(11): 4357 doi: 10.1109/TCSI.2023.3301814[4] Yao J T, Yang Z Y, Jiang Y X, et al. CDACiM: A charge-domain compute-in-memory macro for FP/INT MAC operations with reconfigurable capacitor digital-analog-converter. 2026 31st Asia and South Pacific Design Automation Conference (ASP-DAC), 2026: 844[5] Tang Y C, Zhang Y Q, Liu Z C, et al. Challenges and trends of SRAM based floating point computing-in-memory circuits. 2025 IEEE 16th International Conference on ASIC (ASICON), 2026: 1[6] Diao H K, Luo H Y, Song J H, et al. A computing-in-memory engine supporting one-shot floating-point NN inference and on-device fine-tuning for edge AI. IEEE J Solid State Circuits, 2025, 60(9): 3403 doi: 10.1109/JSSC.2024.3522304[7] Wang W C, Zhang S D, Shamieh L, et al. MIX-ACIM: A 28-nm mixed-precision analog compute-in-memory with digital feature restoration for vector-matrix multiplication. IEEE Solid State Circuits Lett, 2025, 8: 213 doi: 10.1109/LSSC.2025.3590757[8] Shafiee A, Nag A, Muralimanohar N, et al. ISAAC: A convolutional neural network accelerator with in-situ analog arithmetic in crossbars. ACM SIGARCH Comput Archit News, 2016, 44(3): 14 doi: 10.1145/3007787.3001139[9] Zuo P S, Wang Q S, Luo Y B, et al. Precise and scalable analogue matrix equation solving using resistive random-access memory chips. 
Nat Electron, 2025, 8(12): 1222 doi: 10.1038/s41928-025-01477-0[10] Yangdong X J, Wang C, Zhao Y C, et al. Ultrahigh-precision analog computing using memory-switching geometric ratio of transistors. Sci Adv, 2025, 11(37): eady4798 doi: 10.1126/sciadv.ady4798[11] Zhang H, Bai Y C, Shen J J, et al. Linearity performance of charge domain in-memory computing: Analysis and calibration. Integr Circuits Syst, 2024, 1(1): 43 doi: 10.23919/ICS.2024.3422968[12] Liu Y, Zhang W Q, Song X F, et al. Error-corrected RRAM-based compute-in-memory accelerator using voltage-clamp current mirror. IEEE Trans Device Mater Relib, 2025, 25(4): 788 doi: 10.1109/TDMR.2025.3612983[13] Xie C J, Shao Z, Zhang M Z, et al. RAC-NAF: A reconfigurable analog circuitry for nonlinear activation function computation in computing-in-memory. IEEE J Solid State Circuits, 2025, 60(10): 3738 doi: 10.1109/JSSC.2025.3563387[14] Yang J Y, Luo X Y, Ke Y, et al. A 33.6–136.2-TOPS/W nonlinear analog computing-in-memory macro for multi-bit LSTM accelerator in 65-nm CMOS. IEEE J Solid State Circuits, 2025: 1[15] Jiang L J, Zhou Y T, Zhang H, et al. Self-calibrating analog circuitry for softmax-scaled function with analog computing-In-memory. IEEE Trans Very Large Scale Integr VLSI Syst, 2026, 34(3): 1067 doi: 10.1109/TVLSI.2026.3651307[16] He P Y, Zhao Y Z, Xie H, et al. A reconfigurable floating-point compute-in-memory with analog exponent preprocesses. IEEE Solid State Circuits Lett, 2024, 7: 271 doi: 10.1109/LSSC.2024.3463208[17] Liu H B, Qian Z Y, Wu W, et al. AFPR-CIM: An analog-domain floating-point RRAM-based compute- in- memory architecture with dynamic range adaptive FP-ADC. 2024 Design, Automation & Test in Europe Conference & Exhibition (DATE), 2024: 1[18] Zhao Y Z, Wang Y, Martins R P, et al. A hierarchical-hybrid floating-point compute-in-memory macro using FP-DAC and FP-ADC for edge-AI devices. IEEE J Solid-State Circuits, 2025: 1 -


Ye Lin received the B.S. degree in electronic information science and technology from Ningbo University, Ningbo, China, in 2023. He is currently pursuing the M.S. degree with the School of Electronic Science and Engineering, Nanjing University, Nanjing, China. His research interests include analog and mixed-signal circuits for deep learning accelerators.

Boheng Jiang received the B.S. degree from the School of Electronic Science and Engineering, Nanjing University, Nanjing, China, in 2025. He is currently pursuing the M.S. degree with the School of Electronic Science and Engineering, Nanjing University, Nanjing, China. His research interests include charge trap transistors (CTTs) and floating-point computing-in-memory (FP-CIM).

Jiayuan Chen received her Bachelor’s degree from the Department of Information Engineering, Nanjing University of Posts and Telecommunications, in 2003, and her Ph.D. degree from the Department of Electronic and Electrical Engineering, University College London (UCL), United Kingdom, in 2008. From September 2009 to January 2011, she was with the Innovation Lab of Alcatel-Lucent Shanghai Bell as a Research Scientist. Since March 2011, she has been with the Network and IT Technology Research Institute, China Mobile Research Institution, where her research focuses on GPU scale-up interconnection, AI infrastructure, and NFV/SDN network transformation.

Dawei Li (Member, IEEE) received the B.S. degree in electrical engineering from the Wuhan University of Science and Technology, Wuhan, China, in 2010, and the Ph.D. degree in electrical engineering from the Huazhong University of Science and Technology, Wuhan, in 2017. He is currently an Associate Professor with South-Central Minzu University, Wuhan. His current research interests include wireline, wireless, and power management.

Yuan Du (S’14-M’17-SM’21) received his B.S. degree from Southeast University (SEU), Nanjing, China, in 2009, and his M.S. and Ph.D. degrees from the Electrical Engineering Department, University of California, Los Angeles (UCLA), in 2012 and 2016, respectively. From 2016 to 2019, he worked for Kneron Inc., San Diego, CA, USA, as a leading hardware architect. Since 2019, he has been with Nanjing University, Nanjing, China, as an Associate Professor. His current research interests include designs of machine-learning hardware accelerators, high-speed inter-chip/intra-chip interconnects, and RFICs. He was the recipient of the Microsoft Research Asia Young Fellow (2008), Southeast University Chancellor’s Award (2009), Broadcom Young Fellow (2015), and IEEE Circuits and Systems Society Darlington Best Paper Award (2021).

Li Du (M’16) received his B.S. degree from Southeast University, Nanjing, China, in 2011, and his Ph.D. degree in Electrical Engineering from the University of California, Los Angeles, CA, USA, in 2016. From June 2013 to Sept. 2016, he worked at Qualcomm Inc., San Diego, CA, USA, designing mixed-signal circuits for cellular communications. From Sept. 2016 to Oct. 2018, he worked at Kneron Inc., San Diego, CA, USA, as a hardware architect research scientist, designing high-performance artificial intelligence (AI) hardware accelerators. After that, he joined Xin Yun Tech Inc., Westlake, CA, USA, in charge of high-speed analog circuit design for 100G/400G optical communication. Currently, he is a professor in the School of Integrated Circuits at Nanjing University. His research includes analog sensing circuit design, in-memory computing design, and high-performance AI processors for edge sensing.

