Citation:

Heyi Huang, Chen Ge, Zhuohui Liu, Hai Zhong, Erjia Guo, Meng He, Can Wang, Guozhen Yang, Kuijuan Jin. Electrolyte-gated transistors for neuromorphic applications[J]. Journal of Semiconductors, 2021, 42(1): 013103. doi: 10.1088/1674-4926/42/1/013103

H Y Huang, C Ge, Z H Liu, H Zhong, E J Guo, M He, C Wang, G Z Yang, K J Jin. Electrolyte-gated transistors for neuromorphic applications[J]. J. Semicond., 2021, 42(1): 013103. doi: 10.1088/1674-4926/42/1/013103

Electrolyte-gated transistors for neuromorphic applications

DOI: 10.1088/1674-4926/42/1/013103

Abstract

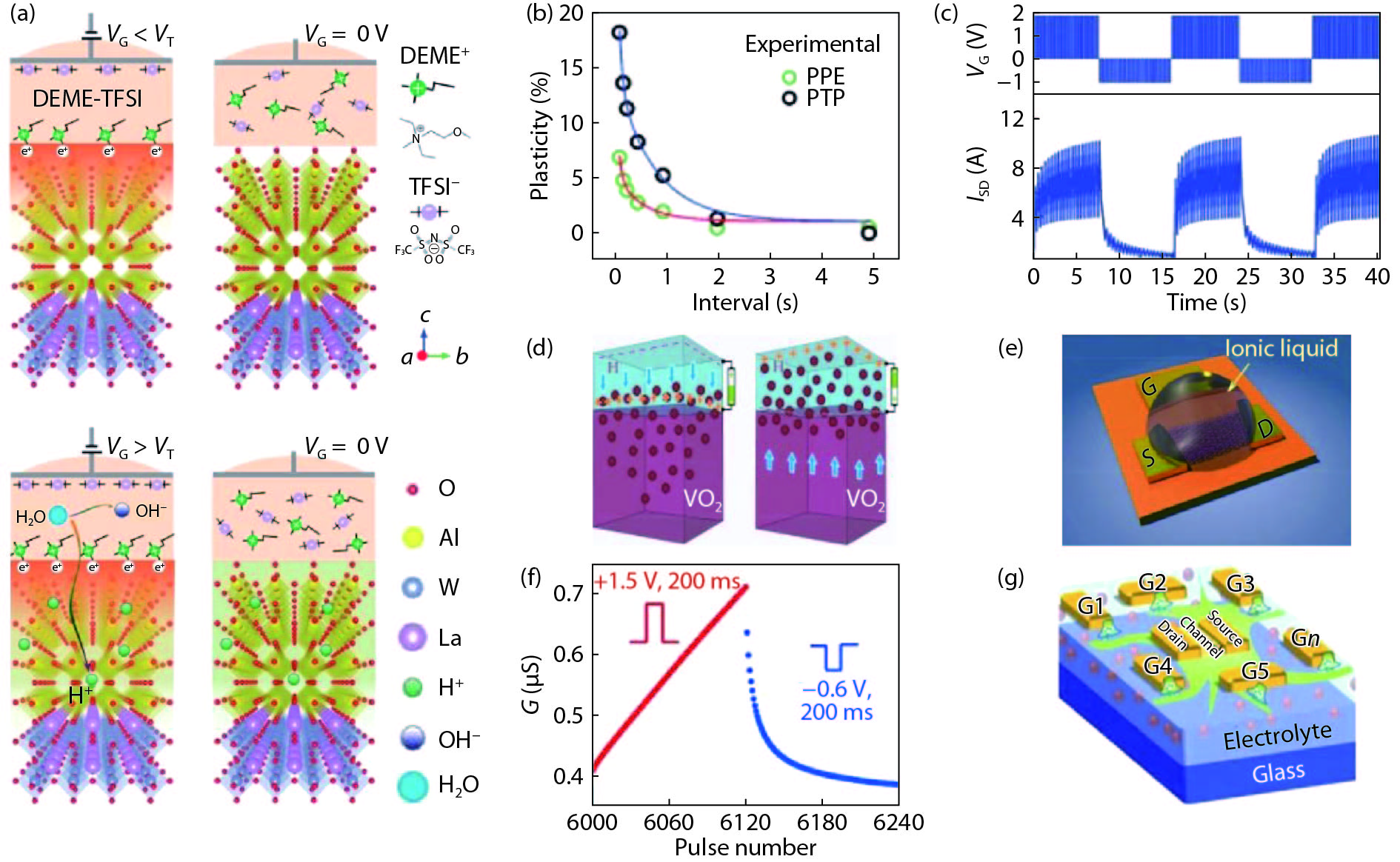

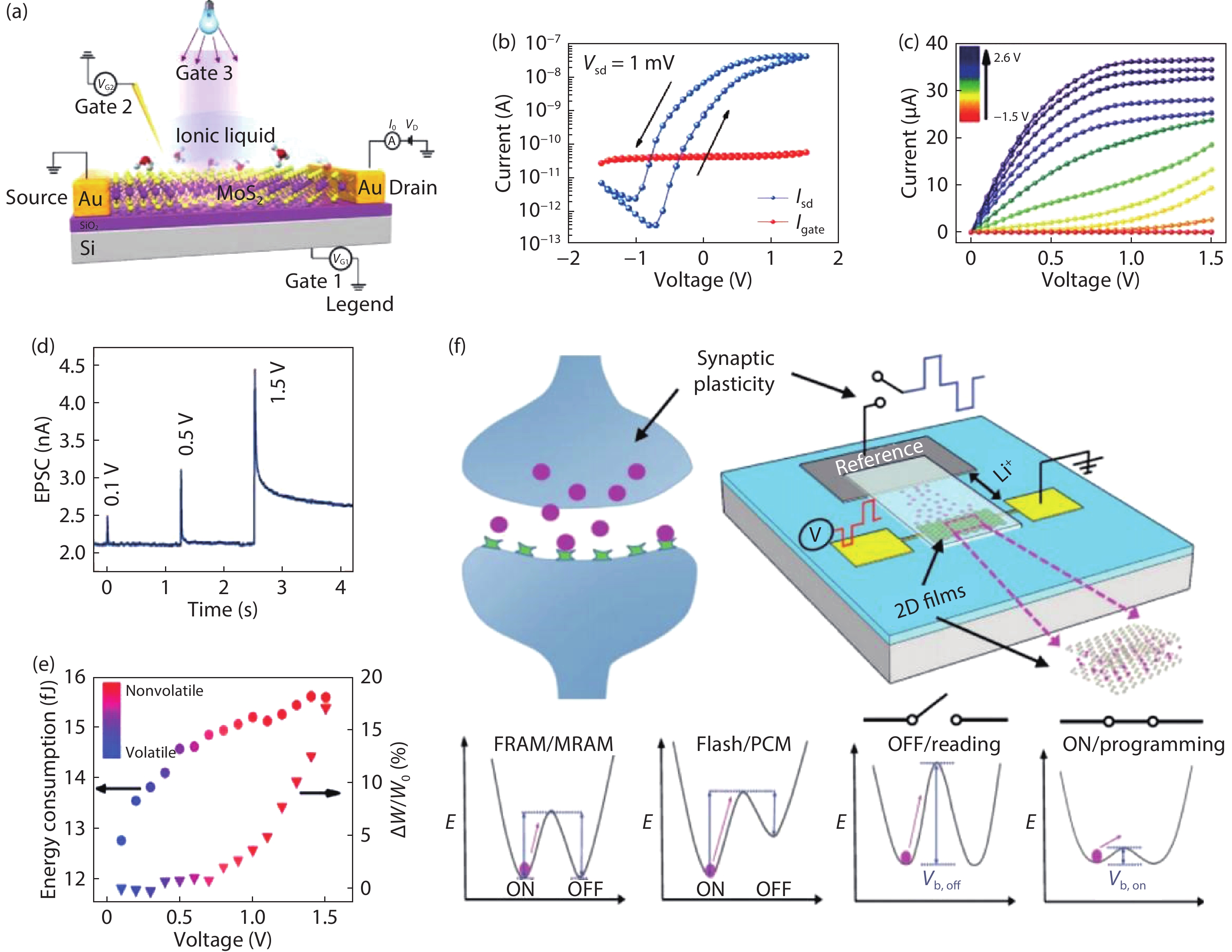

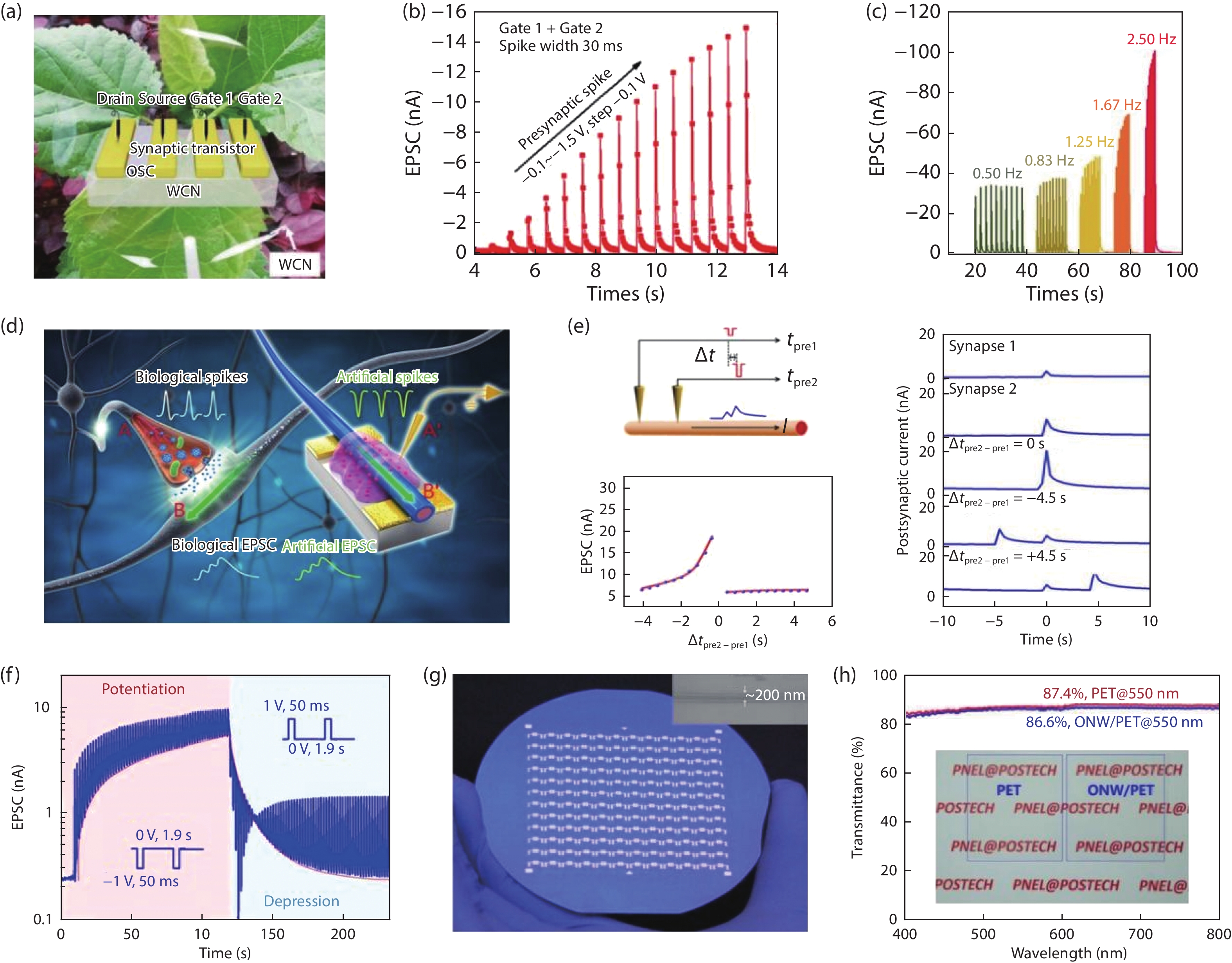

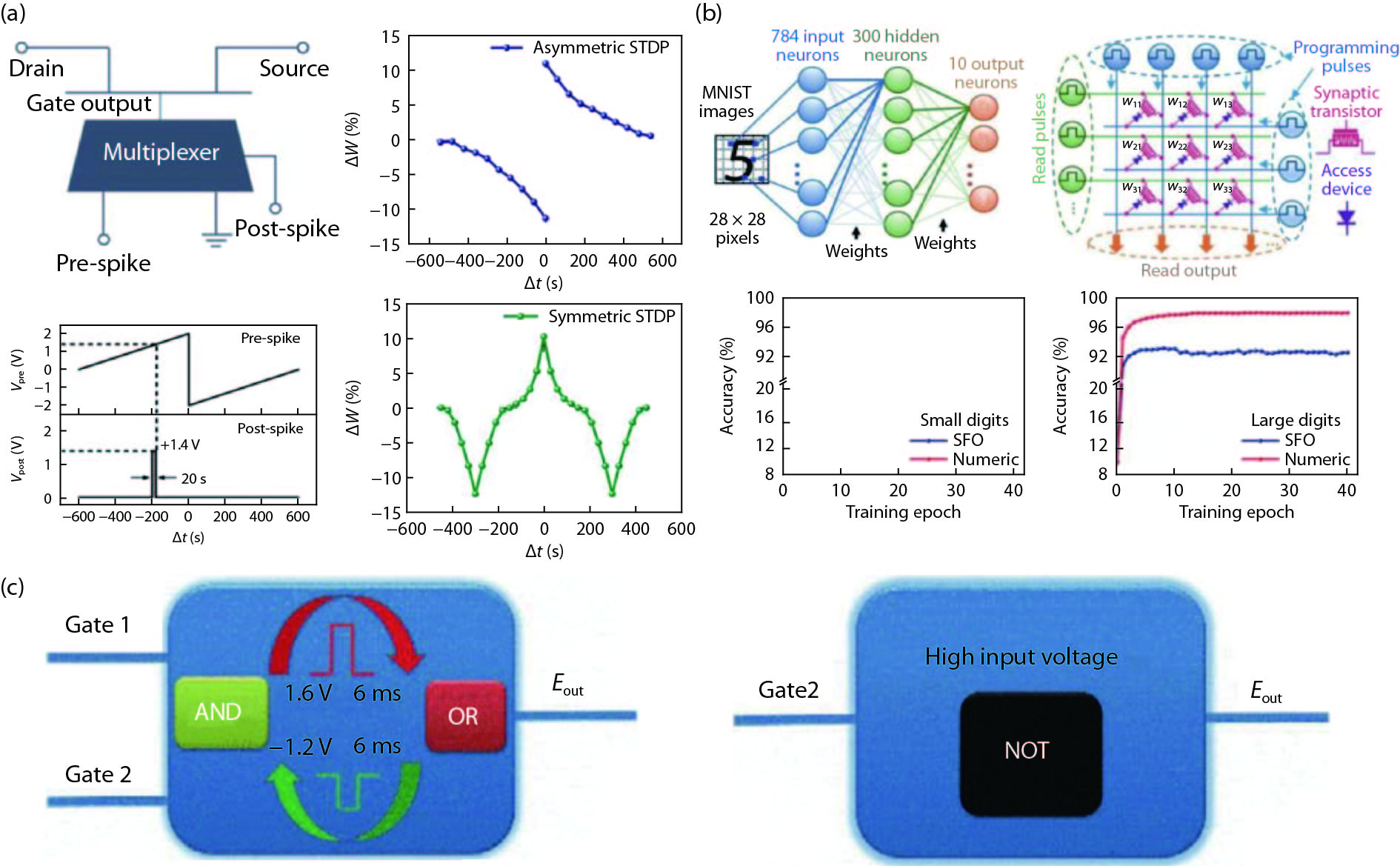

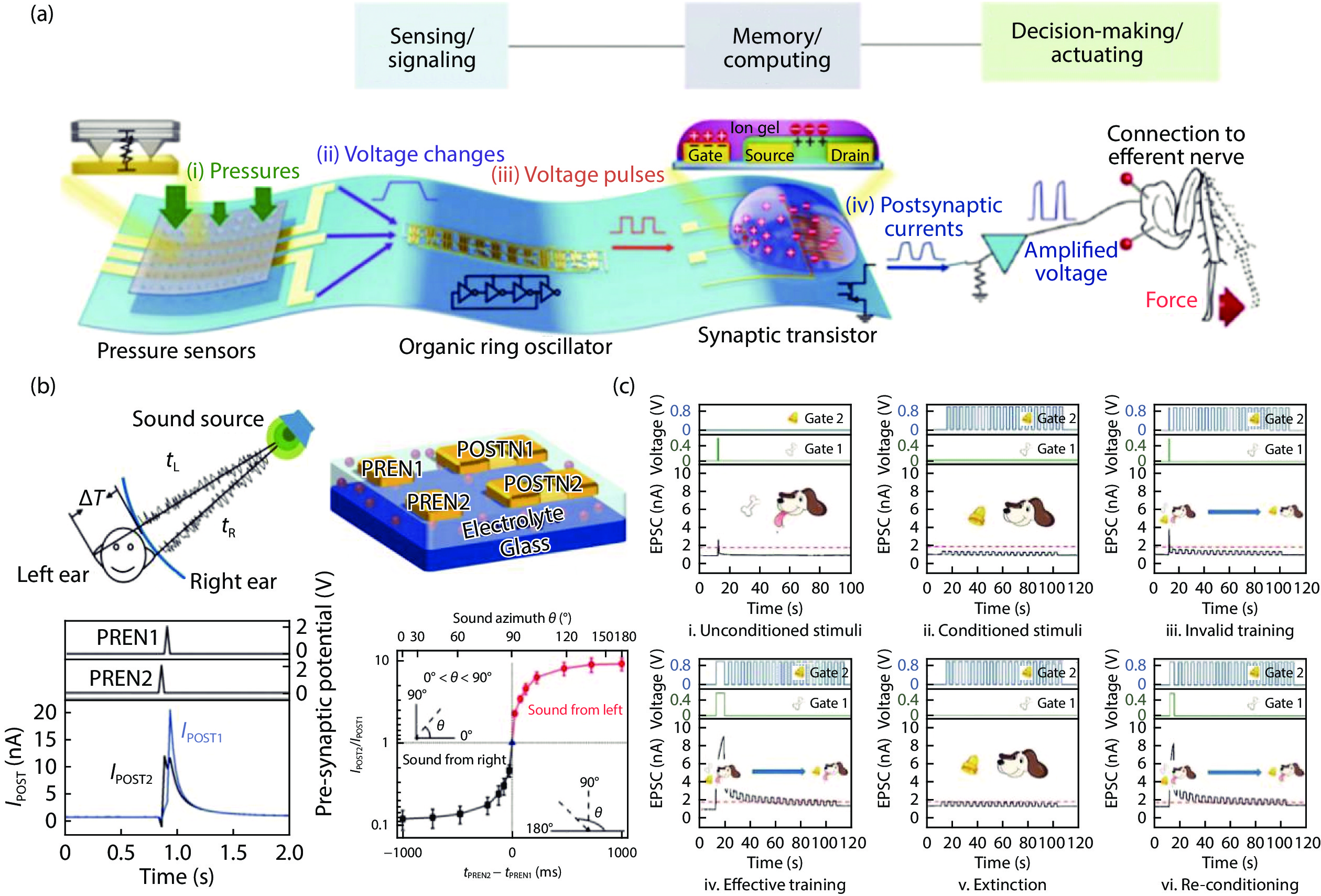

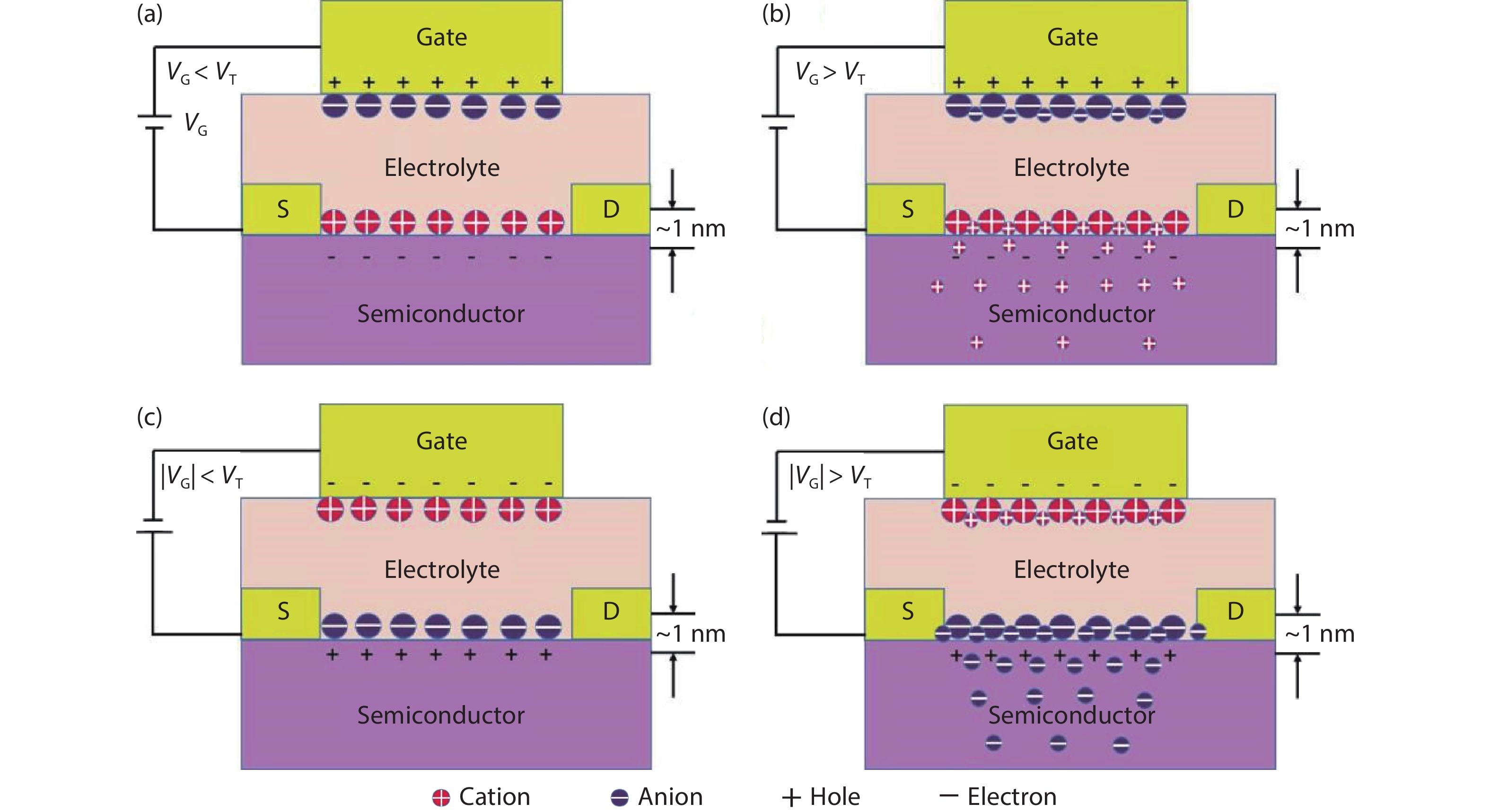

Von Neumann computers are currently failing to keep up with Moore’s law and are limited by the von Neumann bottleneck. To enhance computing performance, neuromorphic computing systems that emulate the functions of the human brain are being developed. Artificial synapses, which process and store signals between neighboring artificial neurons, are essential electronic devices for neuromorphic architectures. In recent years, electrolyte-gated transistors (EGTs) have emerged as promising devices for imitating dynamic synaptic plasticity and for neuromorphic applications. Compared with other electronic devices, EGT-based artificial synapses offer good stability, ultra-high linearity, and symmetric behavior over repeated cycles, and they can be constructed from a variety of materials. They also spatially separate “read” and “write” operations. In this article, we review recent progress and major trends in the field of electrolyte-gated transistors for neuromorphic applications. We introduce the operating mechanisms of the electric double layer and the structure of EGT-based artificial synapses. Then, we review the different channel and electrolyte materials used in EGT-based artificial synapses. Finally, we review potential applications in emulating biological functions.

