Citation:

Zhuoyu Chen, Pingcheng Dong, Zhiyong Lai, Wenyue Zhang, Xianglong Wang, Lei Chen, Fengwei An. A cascadable stereo matching processor with pixel-wise fusion for extended depth sensing[J]. Journal of Semiconductors, 2026, In Press. doi: 10.1088/1674-4926/25120024

Z Y Chen, P C Dong, Z Y Lai, W Y Zhang, X L Wang, L Chen, and F W An, A cascadable stereo matching processor with pixel-wise fusion for extended depth sensing[J]. J. Semicond., 2026, 47(6): 062203. doi: 10.1088/1674-4926/25120024

A cascadable stereo matching processor with pixel-wise fusion for extended depth sensing

DOI: 10.1088/1674-4926/25120024

CSTR: 32376.14.1674-4926.25120024


Abstract

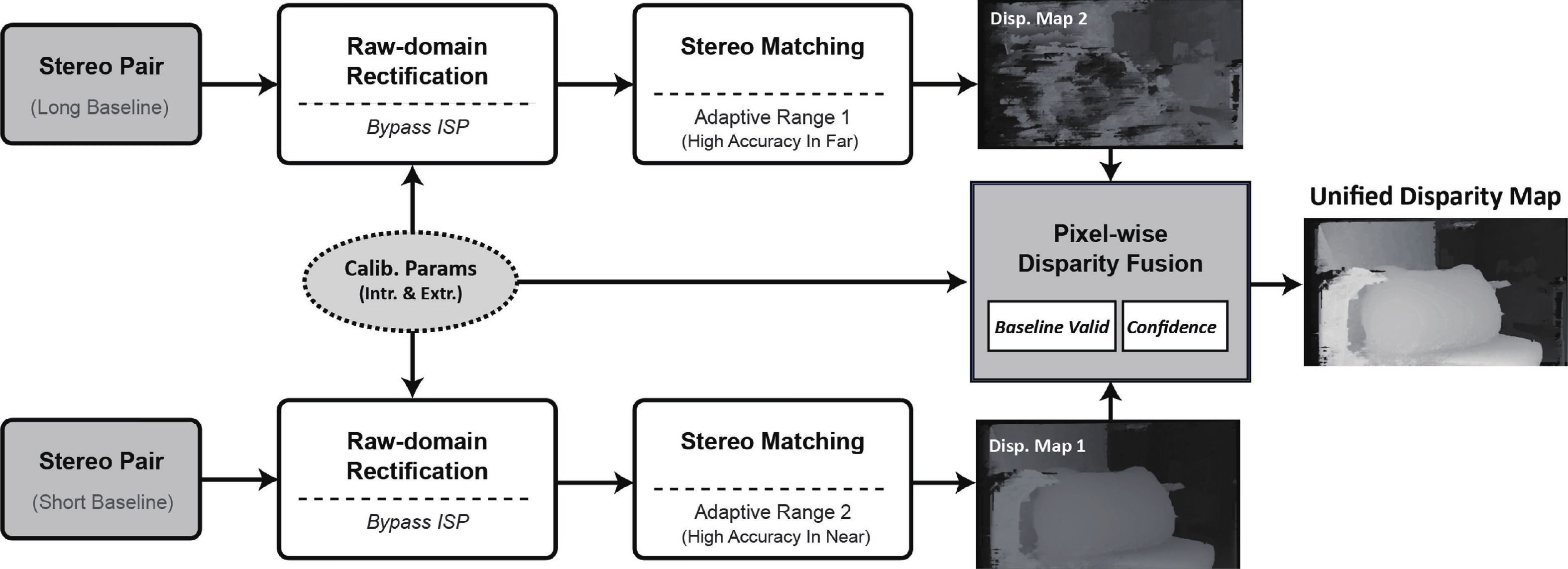

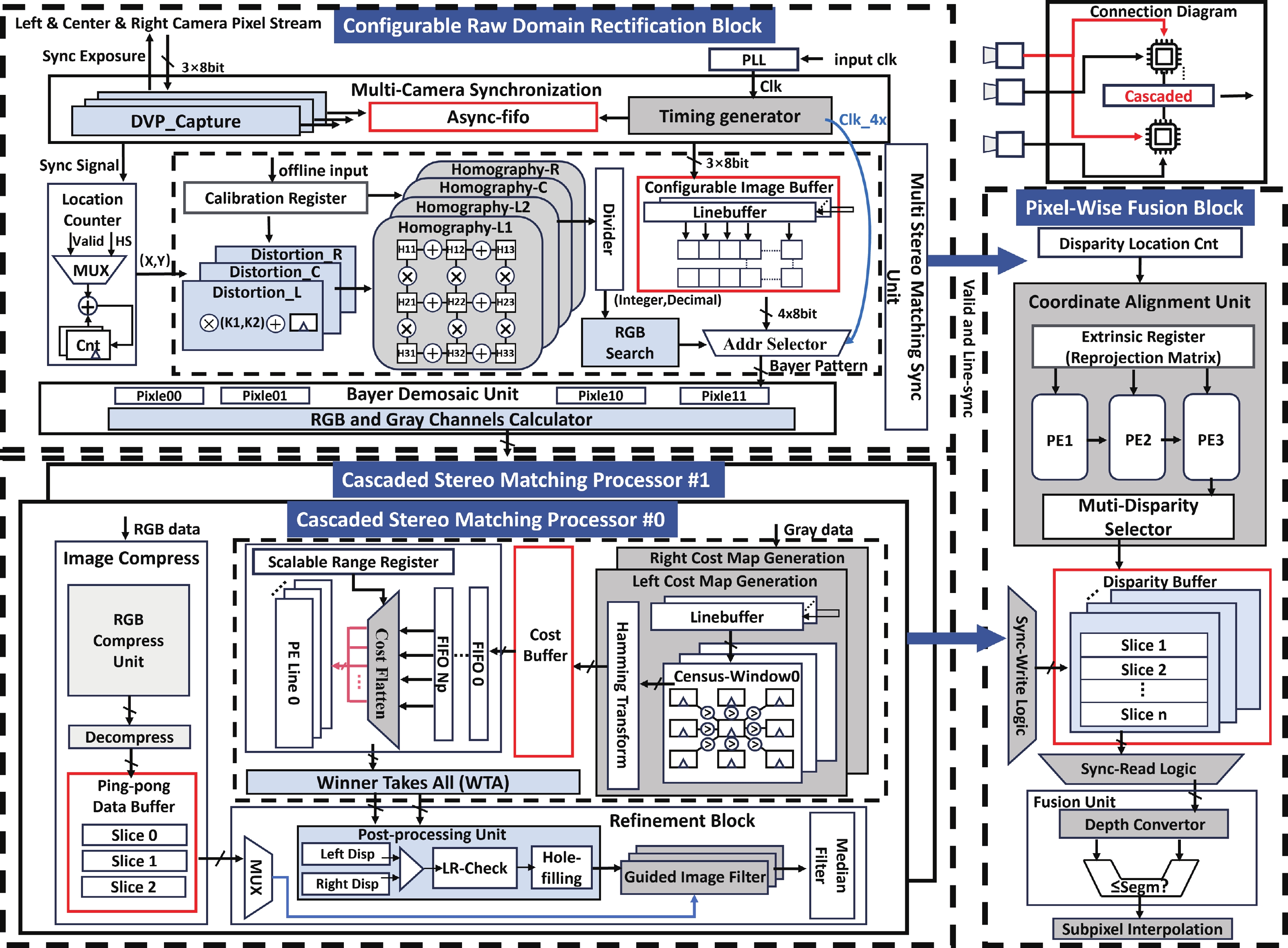

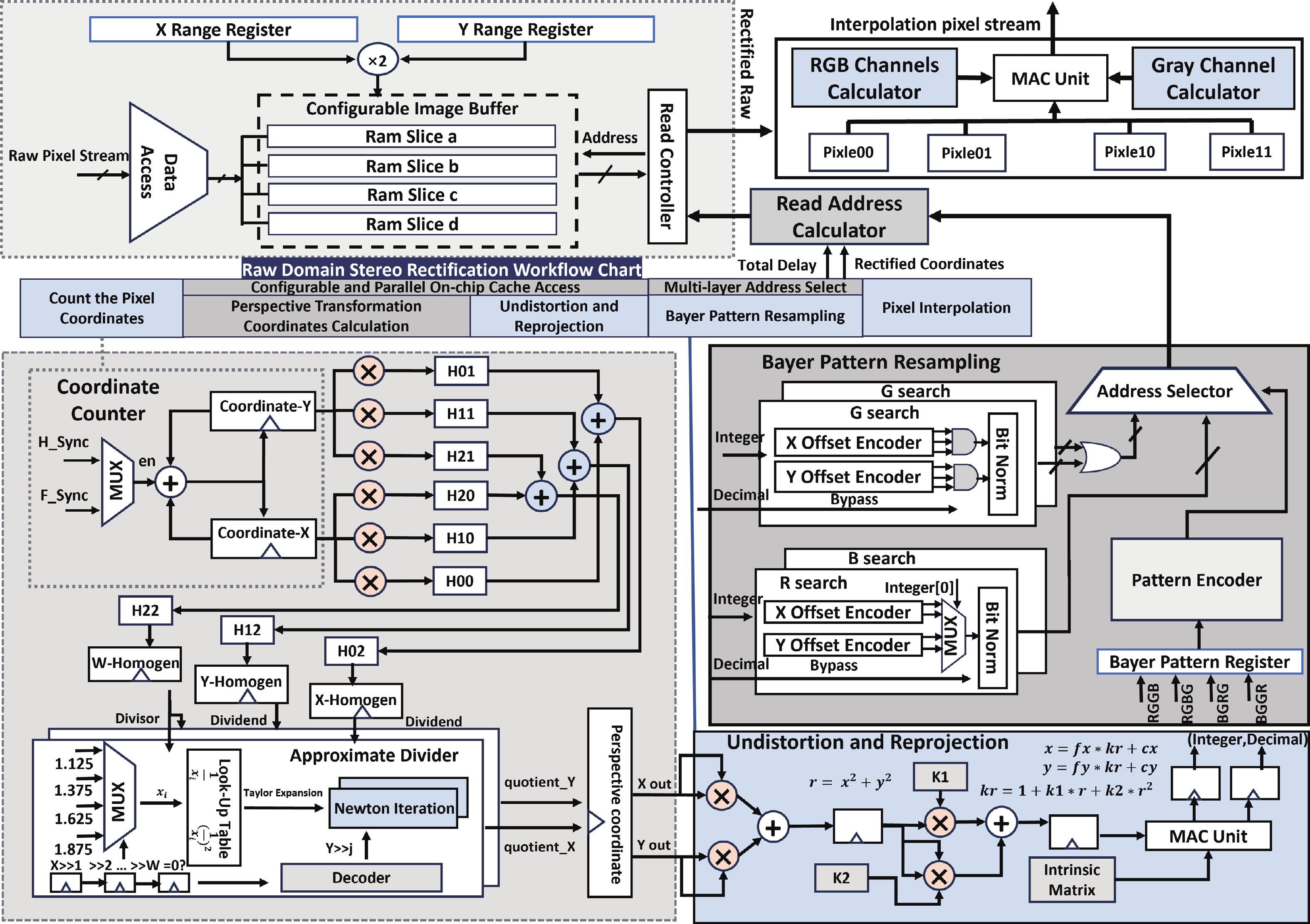

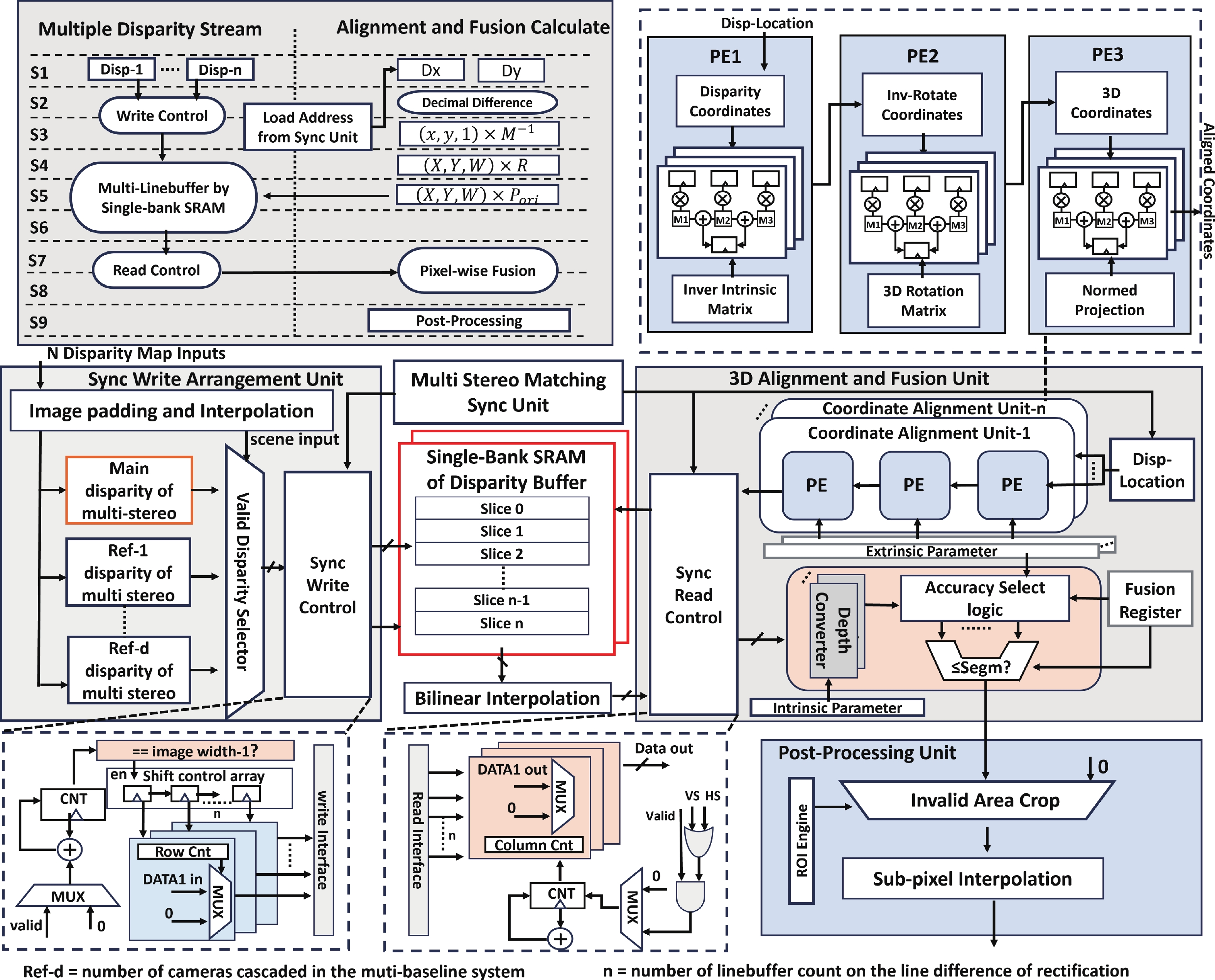

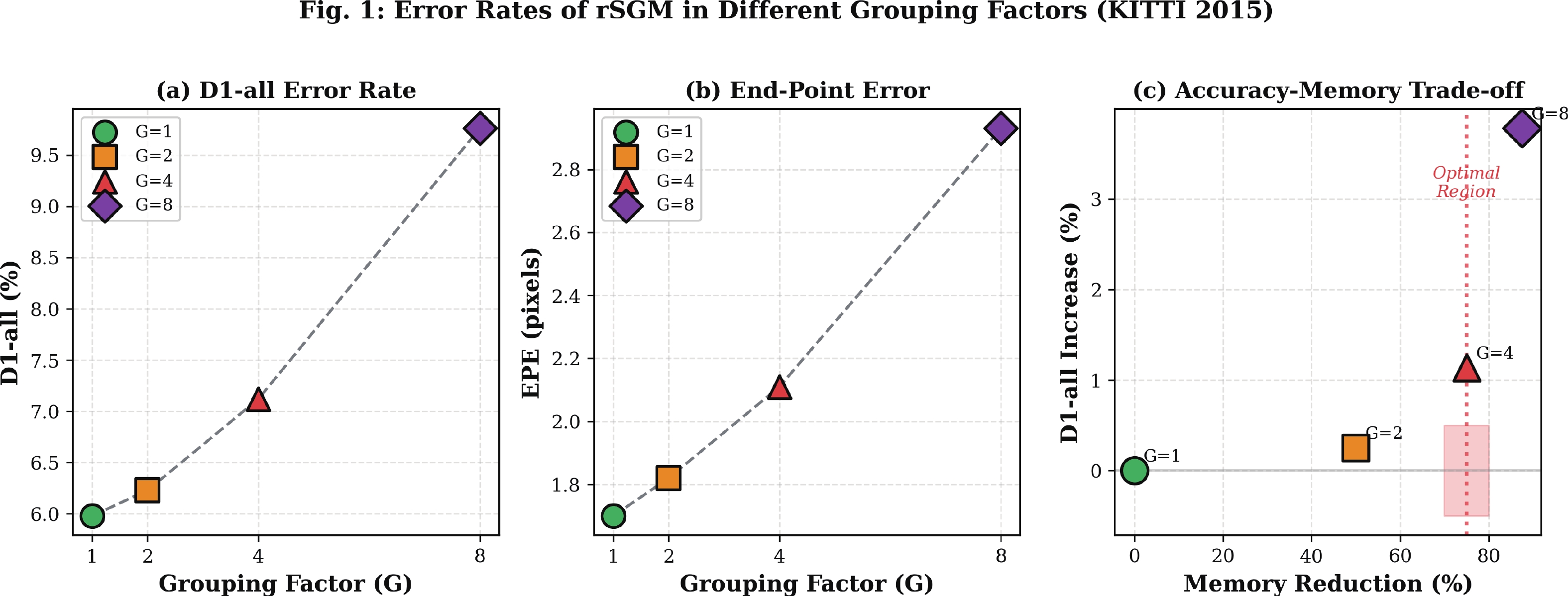

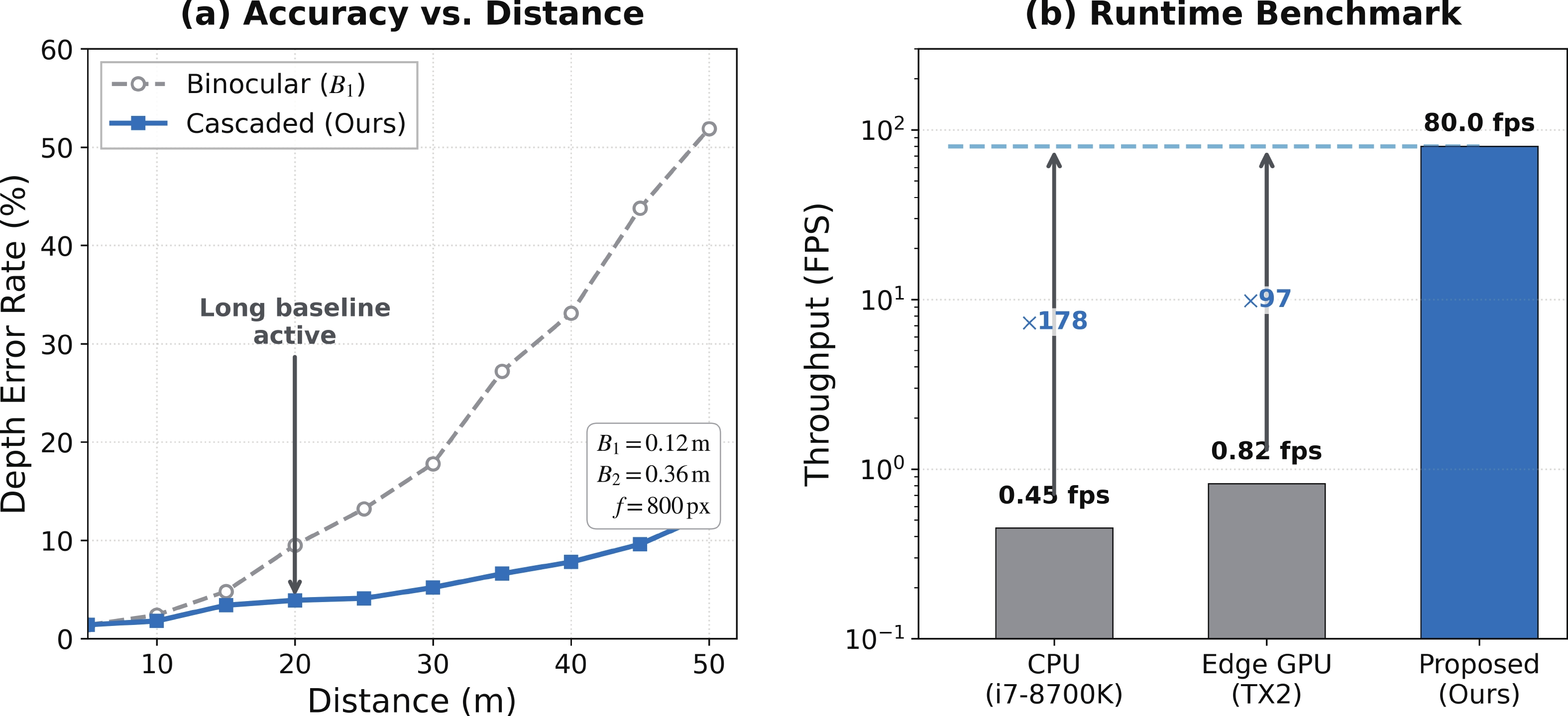

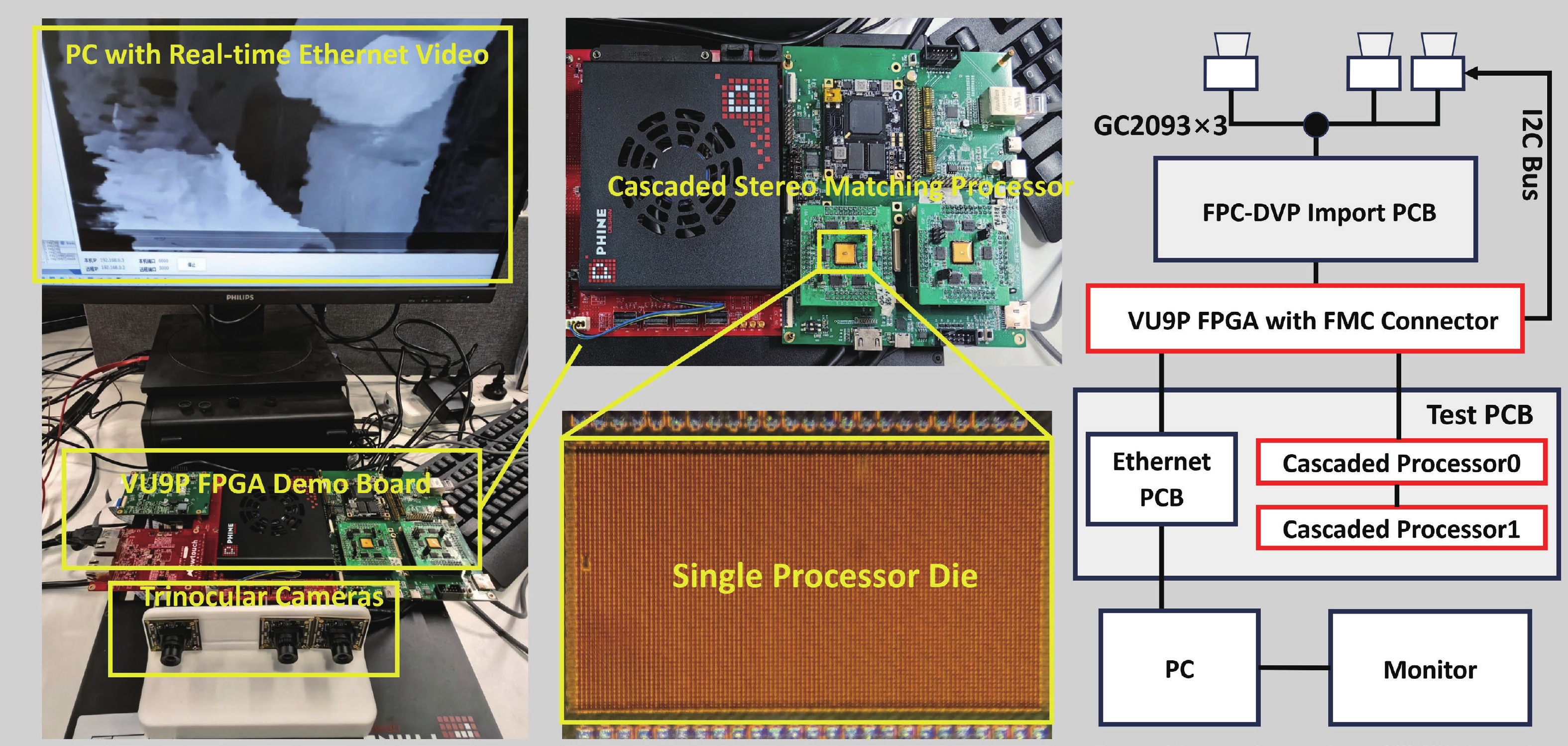

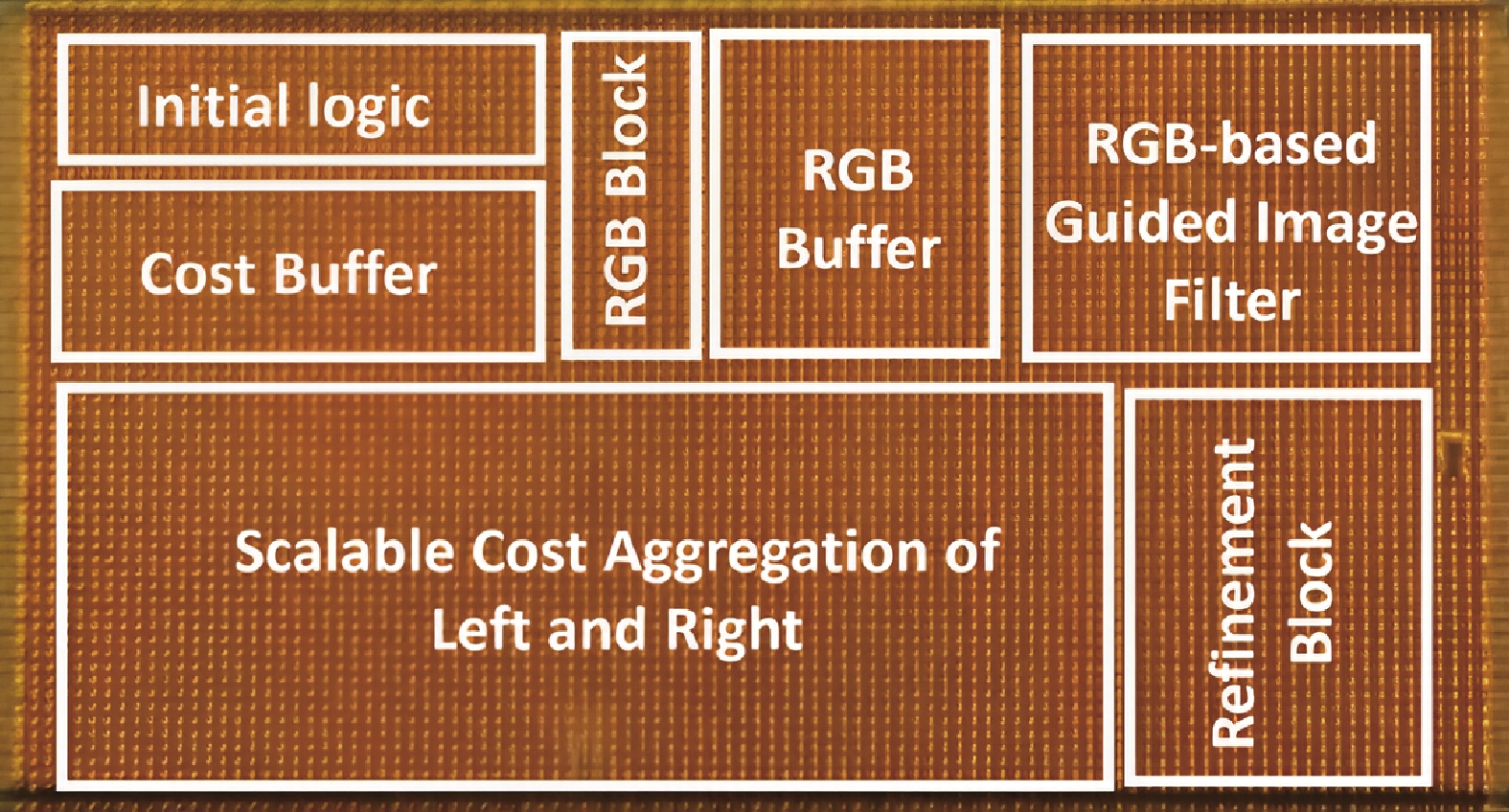

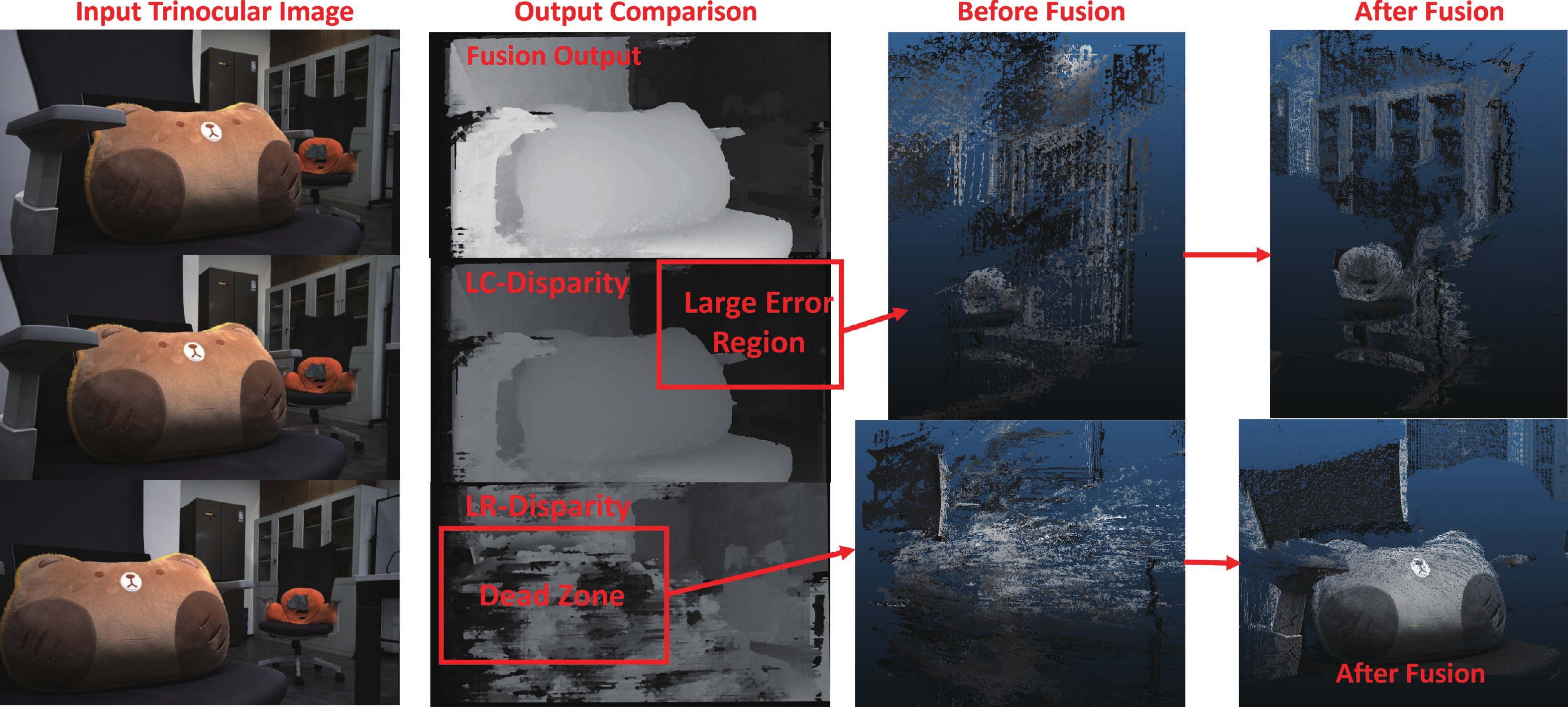

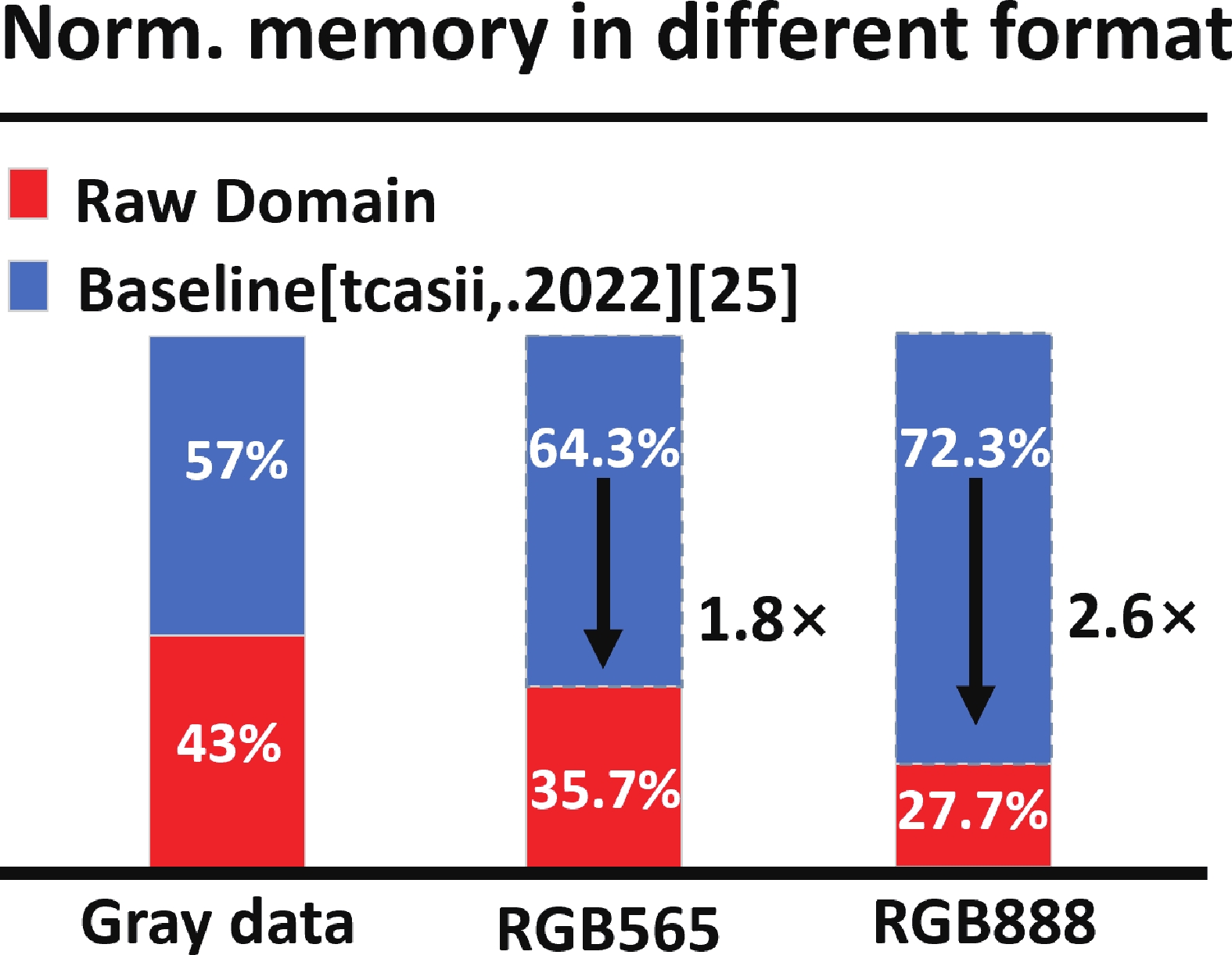

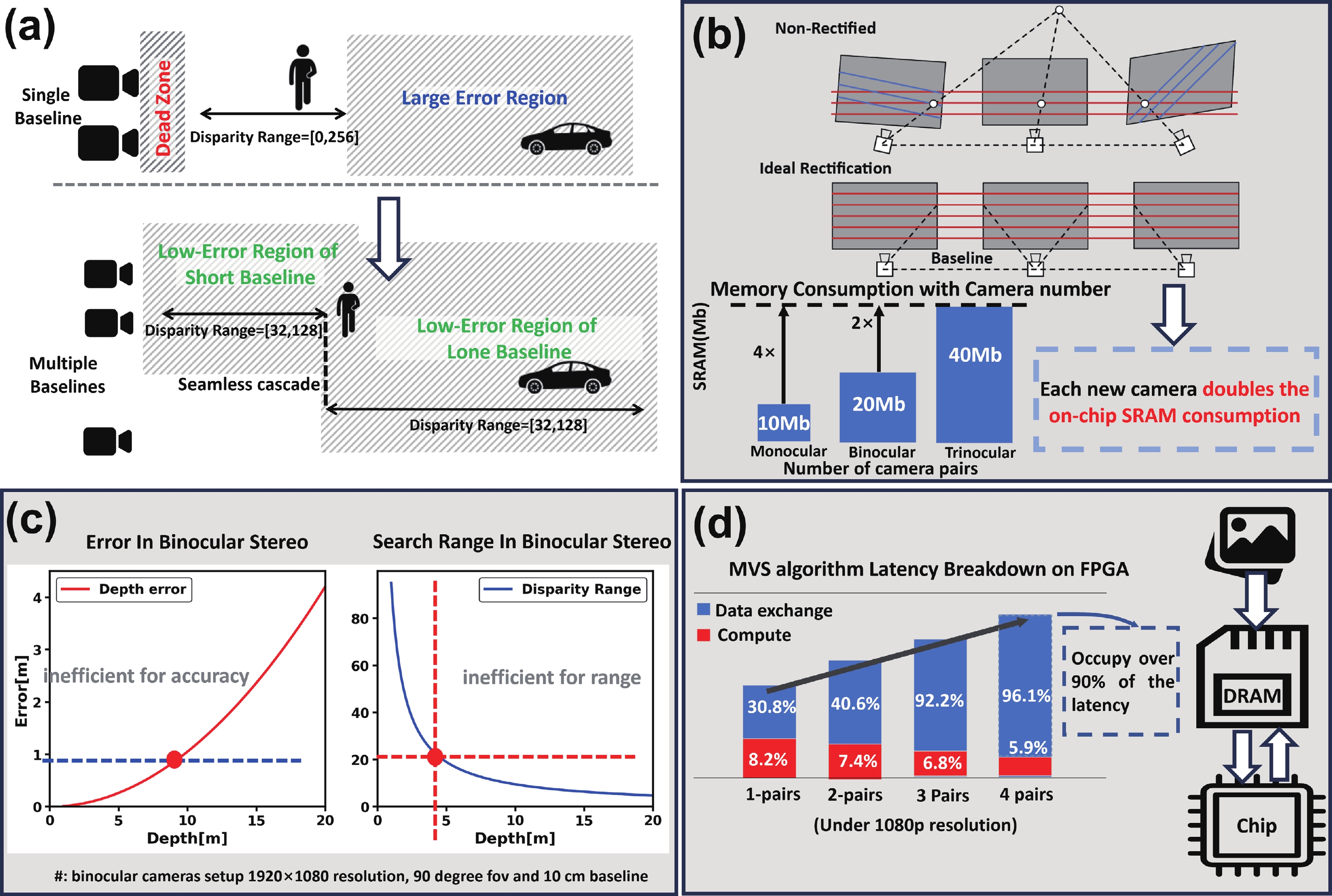

Achieving long-range, high-accuracy depth perception under stringent power constraints remains a critical challenge for stereo vision in edge applications. This work presents a cascadable stereo matching processor that overcomes the inherent trade-off between sensing range and computational efficiency. The core innovation is a scalable semi-global matching (SSGM) algorithm that dynamically optimizes the disparity search range for different baselines, ensuring constant on-chip memory usage and a significant reduction in data movement. The architecture further integrates a raw-domain rectification front-end, which performs direct geometric transformation on Bayer-patterned image streams. This approach eliminates the need for external memory access by bypassing conventional ISP pipelines, thereby maximizing throughput and reducing system memory consumption. Parallel processing paths for multiple baselines converge in a pixel-wise fusion module, which synthesizes a unified depth map by selecting the most reliable disparity estimate for each output pixel. The cascadable stereo matching processor achieves speedups of up to 178× and 97× over CPU and EdgeGPU platforms, respectively, in multi-baseline stereo disparity fusion. Implemented in 40-nm CMOS technology, the processor operates at 160 MHz, achieving a processing speed of 80 frames per second with an energy efficiency of 7.9 pJ/pixel and occupying a core area of 6.04 mm².
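The pixel-wise fusion step described in the abstract can be illustrated with a minimal software sketch. The abstract does not state how reliability is measured, so the per-pixel confidence maps below are an assumption, as are the function names `disparity_to_depth` and `fuse_pixelwise`, the focal length, and the two baseline values. Disparity is converted to depth with the standard pinhole stereo relation Z = f·B/d so that estimates from different baselines become comparable. This is not the processor's hardware implementation.

```python
from typing import List, Sequence

Map2D = List[List[float]]


def disparity_to_depth(disparity: float, focal_px: float, baseline_m: float) -> float:
    """Standard stereo relation Z = f * B / d (depth in metres)."""
    if disparity <= 0:
        return float("inf")  # no valid match: treat as infinitely far
    return focal_px * baseline_m / disparity


def fuse_pixelwise(
    disparities: Sequence[Map2D],   # one disparity map per baseline
    confidences: Sequence[Map2D],   # hypothetical reliability score per pixel
    focal_px: float,
    baselines_m: Sequence[float],
) -> Map2D:
    """For each pixel, keep the depth from the baseline with the highest confidence."""
    n_views = len(disparities)
    height, width = len(disparities[0]), len(disparities[0][0])
    fused = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Index of the baseline whose estimate is assumed most reliable here.
            best = max(range(n_views), key=lambda k: confidences[k][y][x])
            fused[y][x] = disparity_to_depth(
                disparities[best][y][x], focal_px, baselines_m[best]
            )
    return fused


# Illustrative values only: a 1x2 image seen by a short and a long baseline.
short_disp = [[50.0, 4.0]]
long_disp = [[190.0, 10.0]]
short_conf = [[0.9, 0.2]]   # short baseline trusted for the near pixel
long_conf = [[0.3, 0.8]]    # long baseline trusted for the far pixel

depth = fuse_pixelwise(
    [short_disp, long_disp],
    [short_conf, long_conf],
    focal_px=1000.0,
    baselines_m=[0.125, 0.5],
)
print(depth)
```

In this toy case the near pixel takes its depth from the short baseline and the far pixel from the long baseline, which is the range-extension behaviour the fusion module is intended to provide.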



Zhuoyu Chen received the bachelor’s degree from the Southern University of Science and Technology, China, in 2022, where he is currently pursuing the Ph.D. degree with the School of Microelectronics. His research primarily focuses on stereo vision processors, high-performance custom AI accelerators, and hardware-software co-design.

Pingcheng Dong (Graduate Student Member, IEEE) received the BEng degree from the Southern University of Science and Technology in 2022. He is currently pursuing the Ph.D. degree with The Hong Kong University of Science and Technology. His research interests include stereo matching accelerators, model compression, AI chips, and hardware-software co-design.

Zhiyong Lai received the bachelor’s degree from the University of Science and Technology of China, in 2024. He is currently pursuing the master’s degree with the School of Microelectronics, Southern University of Science and Technology. His research primarily focuses on image processing algorithms, custom AI accelerators, and hardware-software co-design.

Wenyue Zhang received the bachelor’s degree from the Southern University of Science and Technology in 2022. She is currently pursuing the dual Ph.D. degree with the Southern University of Science and Technology and Pengcheng Laboratory. Her current research interests include computer vision and the design of AI accelerators.

Xianglong Wang received the bachelor’s degree from the Southern University of Science and Technology, China, in 2020, where he is currently pursuing the Ph.D. degree with the School of Microelectronics. His research interests include the design of energy-efficient hardware algorithms for image processing.

Lei Chen received the B.S. and M.S. degrees from Qingdao University of Science and Technology in 2006 and 2009, respectively, and the Ph.D. degree from Hiroshima University, Higashihiroshima, Japan, in 2012. Since October 2012, she has been doing her post-doctoral research with the HiSIM Research Center, Hiroshima University. From 2018 to 2020, she was with Sharp Company Ltd. Then, she was with the Peng Cheng Laboratory, Shenzhen, China, from 2020 to 2021. She is currently a Research Associate Professor with the Southern University of Science and Technology, Shenzhen. Her research interests include circuit design and hardware algorithm design for image processing and video compression.

Fengwei An (Member, IEEE) received the bachelor’s and master’s degrees from Qingdao University of Science and Technology, China, in 2006 and 2010, respectively, and the Ph.D. degree from Hiroshima University, Japan, in 2013. He is currently an Associate Professor with the Shenzhen-Hong Kong Institute of Microelectronics, Southern University of Science and Technology (SUSTech). He has held significant positions, including an Associate Professor with Hiroshima University and a Chief Engineer with Panasonic Semiconductor Company Ltd., Japan. Since March 2019, he has been with SUSTech. His work has been published in top journals and conferences. He holds nine Chinese invention patents and three Japanese patents. His research interests include high-performance video image processing chips and AI/digital signal processing chips. Dr. An is a member of ACM. He was a recipient of the 2023 Outstanding Teaching Award from SUSTech, the APCCAS 2022 Best Paper Nomination Award, the PrimeAsia 2022 Bronze Leaf Award, and the Second Prize of the 2020 Wu Wenjun Artificial Intelligence Science and Technology Award.

